medium

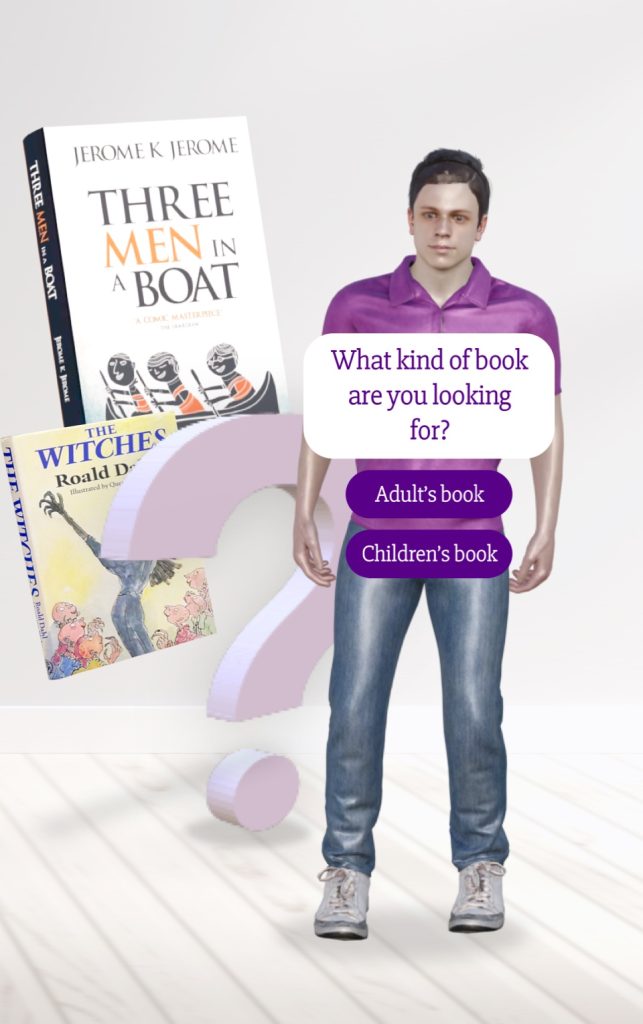

3D digital assistant in webAR

Turn a 3D avatar into a virtual assistant that gives your customers programmed answers, offering self-service information through a decision tree of questions and answers.

Experience overview 📖

A webAR experience designed to inform and assist users by offering information tailored to the business or to the moment they are in. It is built on a question-and-answer decision tree that can be adapted to any situation, and it is accompanied by a 3D avatar that helps humanize the experience.

Visualize this experience

Scan the QR code and point your device at the marker.

Uses and benefits 🌱

It is a solution that covers several user-experience objectives, focused on giving users autonomous access to information and on enriching customer touch points. It applies to many business sectors: digital assistants could be developed for beauty, wine, and food product recommendations, or as meeting points in tourism, leisure, and entertainment that enrich the user experience.

Features and tips 💡

The following features have been used to shape this experience:

3D models and animations.

Different types of resources, or Assets, can be added to scenes in Onirix. One of the most used resources in this type of experience is the 3D model. With this Asset format it is possible to add elements that include fully animated scenes. These animations can be triggered at different times from the Onirix scene editor, so they can be used to tell stories.

In the case of this experience, several 3D models are available:

- Avatar: a 3D character model with several movement animations that accompanies us throughout the experience as an expert recommender.

- Thematic elements: books and other elements related to the recommendation, which can be edited to match the theme of the experience.

Images and graphics

Another format used is flat PNG images, which build the clickable and interactive elements. The experience offers a series of boxes with questions and answers that let us interact and discover information through book recommendations.

For more information see our documentation on 3D models in Onirix.

Code editor: HTML, CSS and JavaScript. Embed SDK.

For this experience we used the code editor, where we set up the images and UI graphics that drive the interaction and conversation with the avatar. The decision tree plays a key role: it is a pre-established set of connected information that feeds the experience and forms the knowledge base of the virtual assistant. It is fully editable and adaptable to any business or context, so the questions and answers can be defined and controlled at any time, much like a web assistance chatbot. The logic that displays the different possible scenarios is implemented through the Embed SDK.
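To make the decision tree idea more concrete, here is a minimal sketch in plain JavaScript. It is not the code used in this experience: the node ids, the texts, and the names `tree`, `createAssistant`, and `onShow` are all hypothetical, and the Embed SDK calls are deliberately left out. It only shows one way the questions and answers could be stored as data and walked as the user clicks.

```javascript
// Hypothetical decision tree. Each node holds the text the avatar shows
// and the options the user can click. Ids and texts are illustrative only.
const tree = {
  start: {
    text: "Hi! What kind of book are you looking for?",
    options: [
      { label: "Fiction", next: "fiction" },
      { label: "Non-fiction", next: "nonfiction" },
    ],
  },
  fiction: {
    text: "Do you prefer a short or a long read?",
    options: [
      { label: "Short", next: "fictionShort" },
      { label: "Long", next: "fictionLong" },
    ],
  },
  // Leaf nodes: a final recommendation with no further options.
  nonfiction: { text: "Try a popular science title.", options: [] },
  fictionShort: { text: "Try a short story collection.", options: [] },
  fictionLong: { text: "Try a classic novel.", options: [] },
};

// Walks the tree. `onShow` is an assumed callback that receives the node
// to display (in a real scene it would update the question/answer boxes).
function createAssistant(tree, startId, onShow) {
  let currentId = startId;
  const show = () => onShow(tree[currentId]);
  return {
    // Reset to the first question and display it.
    start() {
      currentId = startId;
      show();
    },
    // Move to the node linked to the clicked option, if it exists.
    choose(label) {
      const option = tree[currentId].options.find((o) => o.label === label);
      if (!option) return false; // ignore clicks that match no option
      currentId = option.next;
      show();
      return true;
    },
    // Node currently on screen.
    current() {
      return tree[currentId];
    },
  };
}
```

Because the tree is plain data, adapting the assistant to another business would mean editing the `tree` object only. In an Onirix project, the click events received through the Embed SDK would presumably call something like `choose`, and `onShow` would update the UI images; that wiring is an assumption and is not shown here.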

See the online documentation of the code editor.

See the Embed SDK documentation.

Surface recognition

The webAR experience has been developed with surface detection, allowing us to use surfaces as markers. This is a great advantage if we want to let the user decide where to place the content without a reference image, or if we intend to work with a large number of models, where scalability and accurate model size are very important. On Android devices, WebXR is used for surface recognition, while on iOS we rely on our own SLAM development, which resolves the incompatibilities of Spatial AR on the web for iPhone and iPad.
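As an illustration of the Android/iOS split described above, the sketch below shows how a page could check for WebXR AR support using the standard `navigator.xr.isSessionSupported("immersive-ar")` call and fall back otherwise. Onirix handles this choice internally, so this is not its implementation; the function name `pickSurfaceTracking` and the return values `"webxr"` and `"fallback"` are assumptions made for the example.

```javascript
// Returns "webxr" when the browser reports immersive AR support,
// otherwise "fallback" (for example, a SLAM implementation running in the page).
// `nav` is the browser's navigator object, passed in so the function is easy to test.
async function pickSurfaceTracking(nav) {
  if (nav && nav.xr && typeof nav.xr.isSessionSupported === "function") {
    try {
      if (await nav.xr.isSessionSupported("immersive-ar")) {
        return "webxr";
      }
    } catch (err) {
      // The check itself can be rejected (for example, when blocked by a
      // permissions policy); treat that the same as "not supported".
    }
  }
  return "fallback";
}
```

In a page it could be called as `pickSurfaceTracking(navigator)`. Browsers that do not expose `navigator.xr`, as is currently the case for Safari on iPhone and iPad, would take the fallback branch.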

See the world tracking AR documentation.